Seeing 3D chairs: exemplar part-based 2D-3D alignment using a large dataset of CAD models

Abstract

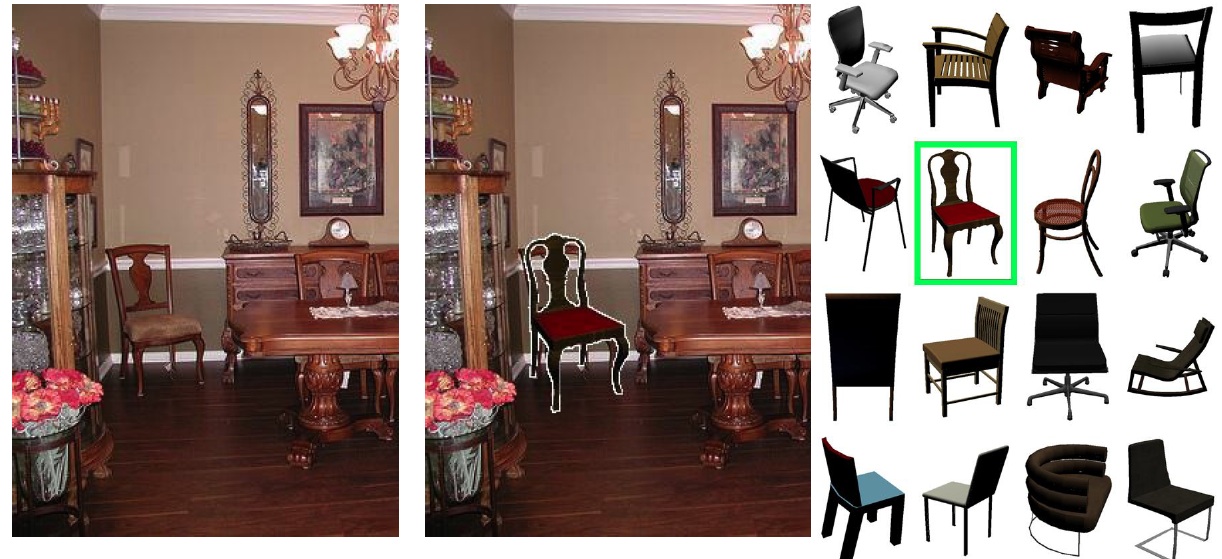

This paper poses object category detection in images as a type of 2D to 3D alignment problem, utilizing the large quantities of 3D CAD models that have been made publicly available on-line. Using the “chair” class as a running example, we propose an exemplar-based 3D category representation, which can explicitly model chairs of different styles as well as the large variation in viewpoint. We develop an approach to establish part-based correspondences between 3D CAD models and real photographs. This is achieved by (i) representing each 3D model using a set of view-dependent mid-level visual elements learned from synthesized views in a discriminative fashion, (ii) carefully calibrating the individual element detectors with respect to each other on a common dataset of negative images, and (iii) matching them to the test image allowing for small mutual deformations but preserving the viewpoint and style constraints. We demonstrate the ability of our system to align 3D models with 2D objects on the challenging PASCAL VOC images, which depict a wide variety of chairs in complex scenes.
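As an illustration of step (ii), the following is a minimal sketch of one way to make scores from independently trained element detectors comparable using only negative data. It assumes an affine rescaling of each detector's raw score, chosen so that the mean score on negatives maps to -1 and the score reached by a small fraction of negatives maps to 0. The function names, the anchor values and the default false-positive rate are hypothetical choices made for this example and are not taken from this page.

```python
from statistics import mean


def fit_affine_calibration(negative_scores, false_positive_rate=0.01):
    """Fit (a, b) so that a * s + b maps the mean negative score to -1
    and the score at the chosen false-positive rate to 0.

    Illustrative assumption: anchors (-1, 0) and the default rate are
    example values, not the authors' published settings.
    """
    if len(negative_scores) < 2:
        raise ValueError("need at least two negative scores")
    ordered = sorted(negative_scores)
    # Index of the score that roughly `false_positive_rate` of negatives reach.
    k = min(len(ordered) - 1, int((1.0 - false_positive_rate) * len(ordered)))
    threshold = ordered[k]
    mu = mean(ordered)
    if threshold <= mu:
        raise ValueError("threshold must exceed the mean negative score")
    a = 1.0 / (threshold - mu)
    b = -a * threshold
    return a, b


def calibrate(score, a, b):
    """Apply the fitted affine calibration to a raw detector score."""
    return a * score + b


if __name__ == "__main__":
    # Two hypothetical detectors whose raw scores live on different scales.
    negatives_a = [float(i) for i in range(100)]
    negatives_b = [5.0 * s + 3.0 for s in negatives_a]
    a1, b1 = fit_affine_calibration(negatives_a)
    a2, b2 = fit_affine_calibration(negatives_b)
    # After calibration, corresponding raw scores become directly comparable.
    print(calibrate(42.0, a1, b1), calibrate(5.0 * 42.0 + 3.0, a2, b2))
```

Because both detectors are anchored on the same negative set, a calibrated score of 0 means the same thing for each of them (a response that only a small fraction of negatives reach), so detections from different elements can be ranked against each other.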

Publication

Seeing 3D chairs: exemplar part-based 2D-3D alignment using a large dataset of CAD models

M. Aubry, D. Maturana, A. Efros, B. Russell and J. Sivic

CVPR, 2014

Download pdf | bibtex

Video

Presentation

You can download our CVPR presentation as PDF (5 MB) and PPTX (31 MB).

Supplementary material

You can see more results from our algorithm here.

Code

The code for discriminative element selection and matching is available at github.com/mathieuaubry/seeing3Dchairs/. The code for rendering views of the model, implemented by Daniel Maturana, is available at github.com/dimatura/seeing3d.

Data

You can download the training 3D models from 3D Warehouse using the URLs provided in this file, or download the training chair images directly here (3 GB).

Copyright Notice

The documents contained in these directories are included by the contributing authors as a means to ensure timely dissemination of scholarly and technical work on a non-commercial basis. Copyright and all rights therein are maintained by the authors or by other copyright holders, notwithstanding that they have offered their works here electronically. It is understood that all persons copying this information will adhere to the terms and constraints invoked by each author's copyright.