Weakly-supervised learning of visual relations

Abstract

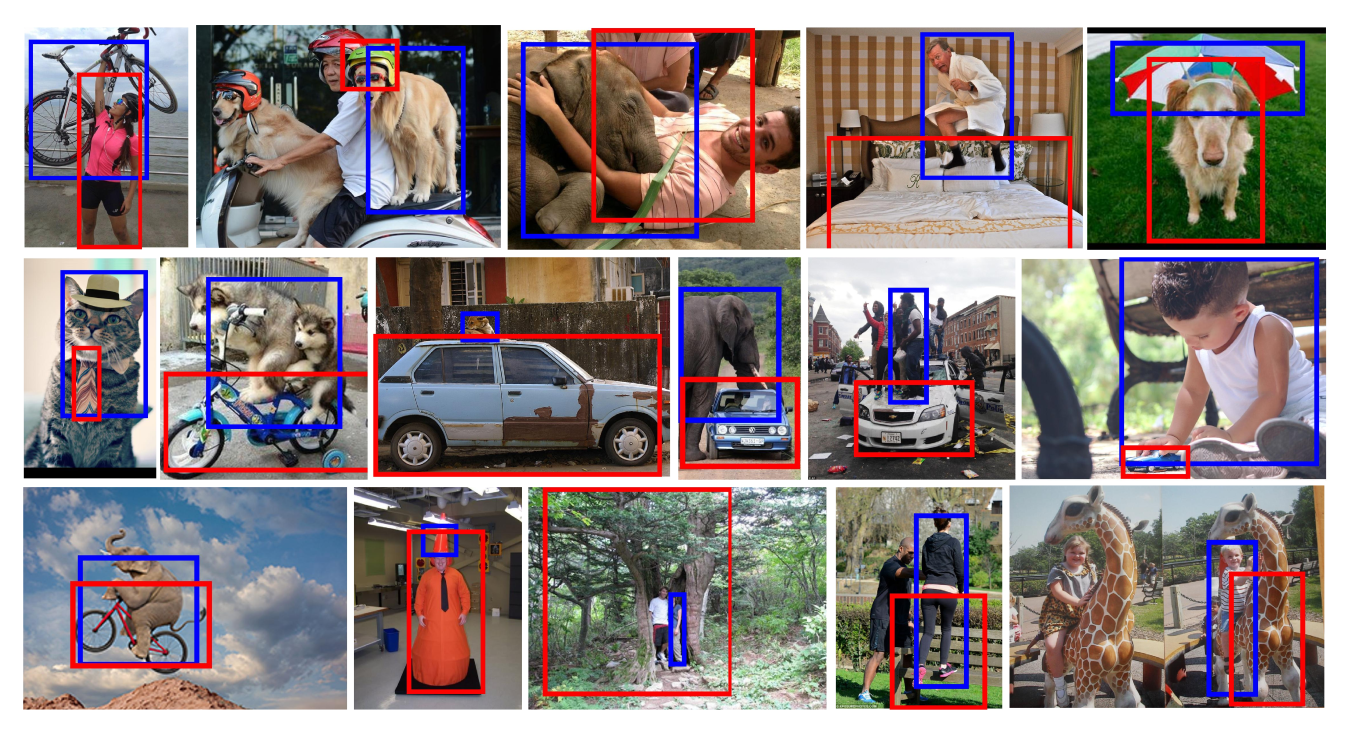

This paper introduces a novel approach for modeling visual relations between pairs of objects. We call a relation a triplet of the form (subject, predicate, object), where the predicate is typically a preposition (e.g., 'under', 'in front of') or a verb ('hold', 'ride') that links a pair of objects (subject, object). Learning such relations is challenging because the objects have different spatial configurations and appearances depending on the relation in which they occur. Another major challenge is the difficulty of obtaining annotations, especially at box level, for all possible triplets, which makes both learning and evaluation difficult. The contributions of this paper are threefold. First, we design strong yet flexible visual features that encode the appearance and spatial configuration of pairs of objects. Second, we propose a weakly-supervised discriminative clustering model to learn relations from image-level labels only. Third, we introduce a new challenging dataset of unusual relations (UnRel) together with exhaustive annotation that enables accurate evaluation of visual relation retrieval. We show experimentally that our model achieves state-of-the-art results on the Visual Relationship Dataset, significantly improving performance on previously unseen relations (zero-shot learning), and we confirm this observation on our newly introduced UnRel dataset.
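To make the triplet notation and the idea of a pairwise spatial configuration concrete, here is a minimal Python sketch. It is not the feature design used in the paper: the names `Triplet`, `Box`, `iou`, and `spatial_features`, as well as the particular encoding (center offset normalized by the subject size, log width and height ratios, and intersection over union), are assumptions chosen only for illustration.

```python
import math
from dataclasses import dataclass
from typing import NamedTuple


class Triplet(NamedTuple):
    """A visual relation (subject, predicate, object), e.g. ("person", "ride", "horse")."""
    subject: str
    predicate: str
    obj: str  # the relation's object; named `obj` to avoid shadowing the builtin


@dataclass(frozen=True)
class Box:
    """Axis-aligned box in pixels: (x1, y1) is top-left, (x2, y2) is bottom-right."""
    x1: float
    y1: float
    x2: float
    y2: float

    @property
    def width(self) -> float:
        return self.x2 - self.x1

    @property
    def height(self) -> float:
        return self.y2 - self.y1

    @property
    def cx(self) -> float:
        return (self.x1 + self.x2) / 2

    @property
    def cy(self) -> float:
        return (self.y1 + self.y2) / 2

    @property
    def area(self) -> float:
        return self.width * self.height


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two boxes (0.0 when they do not overlap)."""
    iw = max(0.0, min(a.x2, b.x2) - max(a.x1, b.x1))
    ih = max(0.0, min(a.y2, b.y2) - max(a.y1, b.y1))
    inter = iw * ih
    union = a.area + b.area - inter
    return inter / union if union > 0 else 0.0


def spatial_features(subj: Box, obj: Box) -> list:
    """Hypothetical pair encoding: where the object sits relative to the subject.

    Center offsets are divided by the subject's width/height so the feature does
    not depend on absolute image scale; log ratios treat shrinking and growing
    symmetrically; IoU captures how much the two boxes overlap.
    """
    dx = (obj.cx - subj.cx) / subj.width
    dy = (obj.cy - subj.cy) / subj.height
    dw = math.log(obj.width / subj.width)
    dh = math.log(obj.height / subj.height)
    return [dx, dy, dw, dh, iou(subj, obj)]


if __name__ == "__main__":
    rel = Triplet("person", "ride", "horse")
    person = Box(100, 50, 200, 250)
    horse = Box(80, 150, 280, 350)
    print(rel, spatial_features(person, horse))
```

For a person box partly overlapping a larger horse box below it, as in the example above, the feature is approximately `[0.3, 0.5, 0.693, 0.0, 0.2]`: the horse is shifted down by half the person's height, is twice as wide (log 2), equally tall, and overlaps with IoU 0.2. Such a hand-crafted spatial vector would typically be combined with appearance features of both boxes before being fed to a relation classifier.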

Paper

[paper] [arXiv] [slides] [poster]

BibTeX

@InProceedings{Peyre17,
  author = "Peyre, Julia and Laptev, Ivan and Schmid, Cordelia and Sivic, Josef",
  title = "Weakly-supervised learning of visual relations",
  booktitle = "ICCV",
  year = "2017"
}

Talk given at ICCV 2017

UnRel Dataset

The full archive containing UnRel images and annotations can be downloaded here:

Code

To run the code, first clone the GitHub project:

Then load the pre-computed features:

Acknowledgements

This work was partly supported by ERC grants Activia (no. 307574), LEAP (no. 336845) and Allegro (no. 320559), the CIFAR Learning in Machines & Brains program, and ESIF, OP Research, development and education Project IMPACT No. CZ.02.1.01/0.0/0.0/15_003/0000468.