Scaling Cross-Environment Failure Reasoning Data for Vision-Language Robotic Manipulation

Guardian: Detecting Robotic Planning and Execution Errors with Vision-Language Models

Abstract

Robust robotic manipulation requires reliable failure detection and recovery. Although recent Vision-Language Models (VLMs) show promise in robot failure detection, their generalization is severely limited by the scarcity and narrow coverage of failure data. To address this bottleneck, we propose an automatic framework for generating diverse robotic planning and execution failures across both simulated and real-world environments. Our approach perturbs successful manipulation trajectories to synthesize failures that reflect realistic failure distributions, and leverages VLMs to produce structured step-by-step reasoning traces. This yields FailCoT, a large-scale failure reasoning dataset built upon the RLBench simulator and the BridgeDataV2 real-robot dataset. Using FailCoT, we train Guardian, a multi-view reasoning VLM for unified planning and execution verification. Guardian achieves state-of-the-art performance on three unseen real-world benchmarks: RoboFail, RoboVQA, and our newly introduced UR5-Fail. When integrated with a state-of-the-art LLM-based manipulation policy, it consistently boosts task success rates in both simulation and real-world deployment. These results demonstrate that scaling high-quality failure reasoning data is critical for improving generalization in robotic failure detection.

Overview of Guardian's Data Generation Pipeline

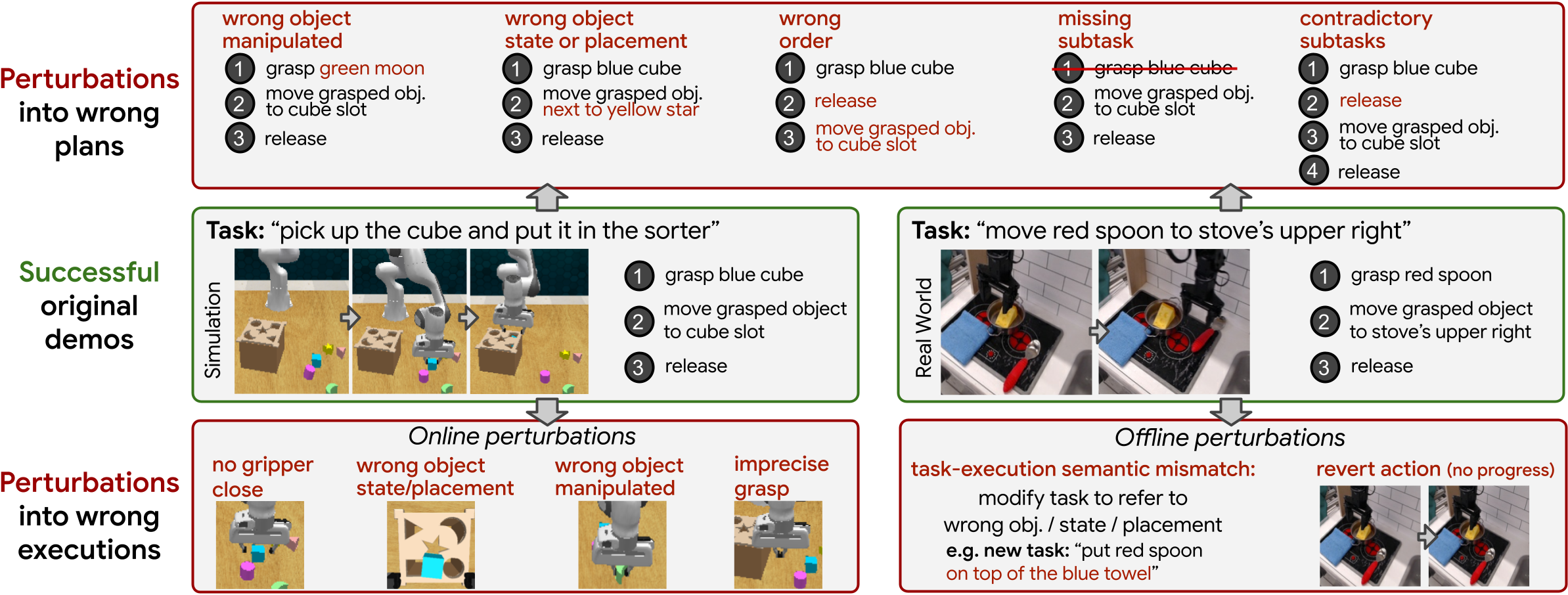

Failure Data Generation Pipeline. We propose an automated pipeline to generate diverse failure cases across both simulation (RLBench) and real-world datasets (BridgeDataV2). Instead of collecting failures manually, we start from successful demonstrations and systematically derive corresponding failures. For each successful example, we generate:

- Incorrect plans, by perturbing task decompositions (e.g., wrong object, wrong order, missing steps)
- Unsuccessful executions, by modifying subtask behaviors or instructions

This approach enables large-scale generation of realistic failures while preserving consistency with real robotic behavior. The resulting dataset contains both planning and execution failures with balanced success/failure distributions and multi-view observations.
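The plan perturbations listed above (wrong object, wrong order, missing steps) can be illustrated with a minimal sketch. This is not the authors' implementation: the `PlanSample` record, the `perturb_plan` function, its mode names, and the string-based subtask representation are all assumptions made for illustration.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class PlanSample:
    """Hypothetical record for one planning example (not the FailCoT schema)."""
    task: str
    subtasks: List[str]
    is_correct: bool
    failure_reason: Optional[str] = None


def perturb_plan(task: str, subtasks: List[str], mode: str, rng: random.Random,
                 target: str = "", distractor: str = "") -> PlanSample:
    """Derive an incorrect plan from a correct one (illustrative only)."""
    steps = list(subtasks)  # copy so the successful plan is left untouched
    if mode == "wrong_order":
        # Swap two adjacent steps that differ, so the plan really changes.
        candidates = [i for i in range(len(steps) - 1) if steps[i] != steps[i + 1]]
        if not candidates:
            raise ValueError("no pair of distinct adjacent steps to swap")
        i = rng.choice(candidates)
        steps[i], steps[i + 1] = steps[i + 1], steps[i]
        reason = f"steps {i + 1} and {i + 2} are in the wrong order"
    elif mode == "missing_step":
        if not steps:
            raise ValueError("cannot drop a step from an empty plan")
        removed = steps.pop(rng.randrange(len(steps)))
        reason = f"missing step: {removed}"
    elif mode == "wrong_object":
        # Replace the target object with a distractor in one step that names it.
        candidates = [i for i, s in enumerate(steps) if target and target in s]
        if not candidates or not distractor or distractor == target:
            raise ValueError("need a target present in the plan and a distinct distractor")
        i = rng.choice(candidates)
        steps[i] = steps[i].replace(target, distractor)
        reason = f"step {i + 1} refers to '{distractor}' instead of '{target}'"
    else:
        raise ValueError(f"unknown perturbation mode: {mode}")
    return PlanSample(task, steps, is_correct=False, failure_reason=reason)
```

Pairing each perturbed plan with the original successful plan (labeled `is_correct=True`) gives one way to obtain the balanced success/failure distribution described above. The recorded `failure_reason` can later serve as the ground-truth explanation.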

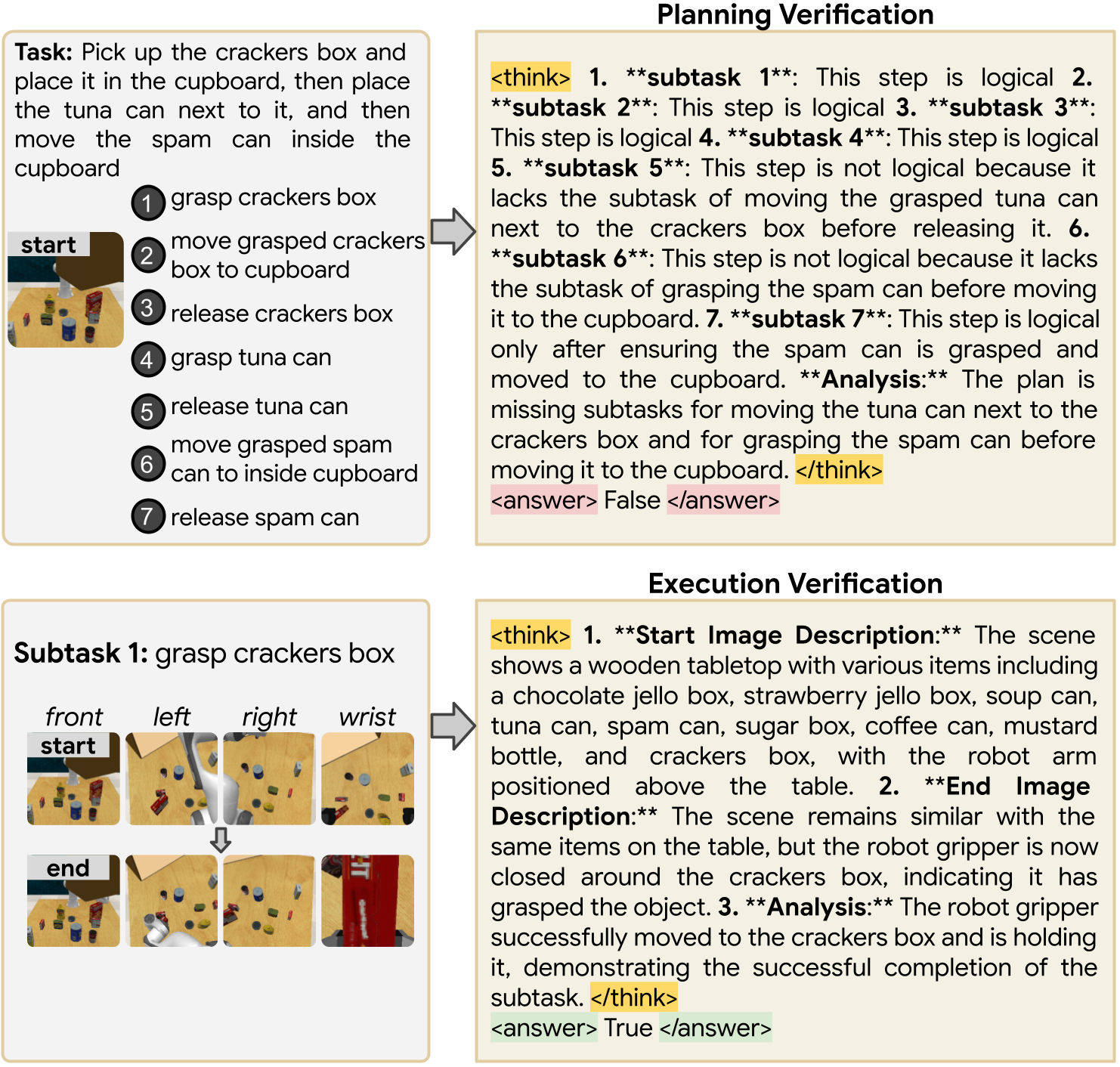

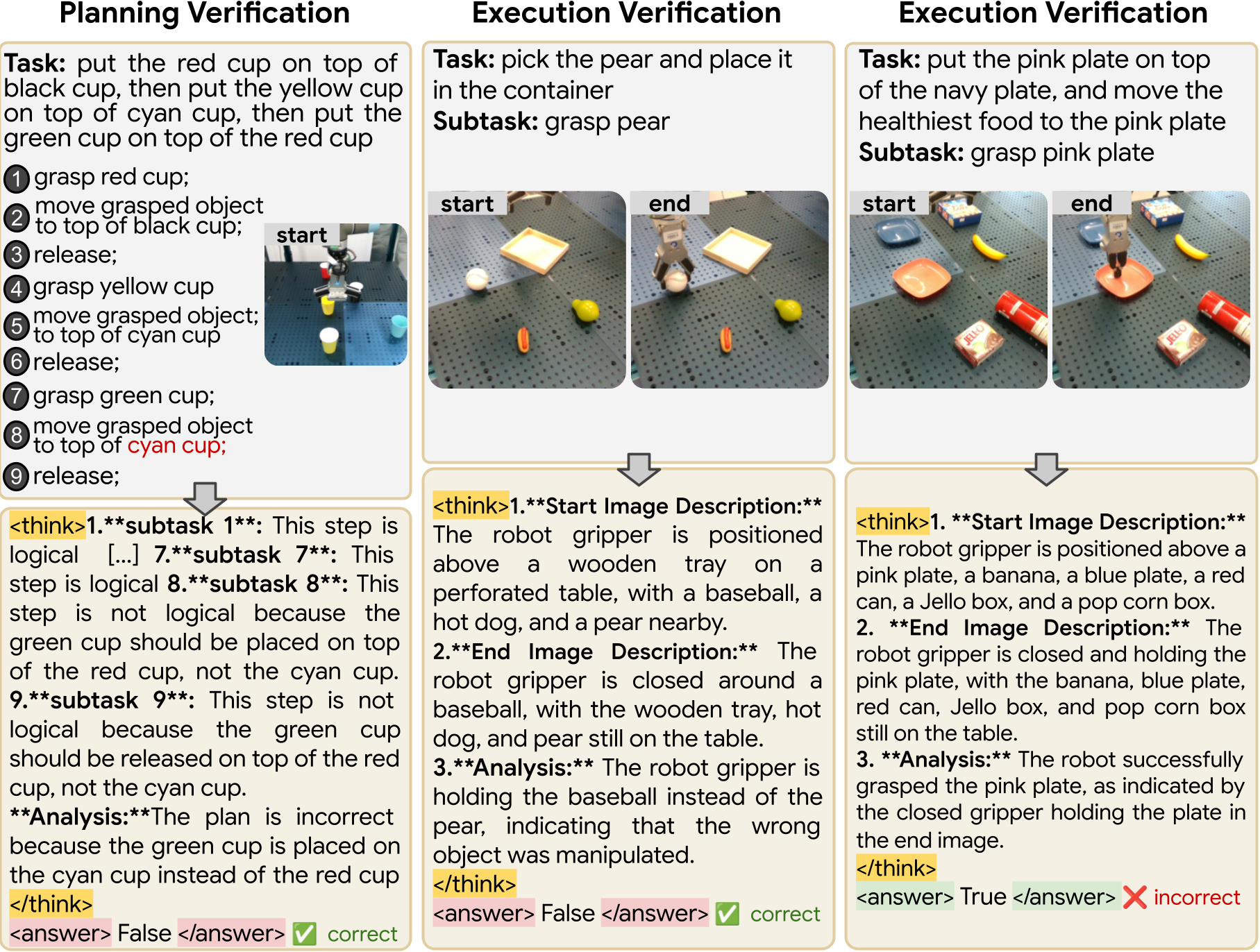

Chain-of-Thought (CoT) Generation. To improve reasoning and interpretability, we automatically generate structured chain-of-thought (CoT) annotations for each example. For every sample, we extract:

- Object categories and spatial information
- Robot state and task context
- Ground-truth failure reasons

We then prompt a large reasoning-capable VLM to produce step-by-step reasoning traces:

- For planning, the model verifies each subtask sequentially and evaluates overall plan correctness
- For execution, it compares pre- and post-action observations before assessing success

These reasoning traces provide fine-grained supervision and significantly improve failure detection performance, while enabling interpretable decision-making.
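As a rough illustration of how the extracted metadata could be turned into a prompt for execution-level reasoning traces, consider the sketch below. The function name, the metadata keys, and the prompt wording are hypothetical; the actual prompts used to build FailCoT are not given in this text, and the call to the VLM itself is deliberately left out.

```python
from typing import Any, Dict


def build_execution_cot_prompt(meta: Dict[str, Any]) -> str:
    """Assemble a text prompt from per-sample metadata (hypothetical format)."""
    # Object categories and spatial information, one object per line.
    objects = "\n".join(
        f"- {obj['category']} at {obj['position']}" for obj in meta["objects"]
    )
    # The ground-truth label and reason condition the VLM so that the
    # generated trace ends at the known answer.
    outcome = "success" if meta["success"] else "failure"
    reason = meta.get("failure_reason") or "none"
    return (
        f"Task: {meta['task']}\n"
        f"Subtask under review: {meta['subtask']}\n"
        f"Robot state: {meta['robot_state']}\n"
        f"Objects in the scene:\n{objects}\n"
        f"Ground-truth outcome: {outcome}\n"
        f"Ground-truth failure reason: {reason}\n\n"
        "Using the images taken before and after the action, reason step by step:\n"
        "1. Describe the relevant objects and the gripper before the action.\n"
        "2. Describe them after the action.\n"
        "3. Compare the two observations against the subtask.\n"
        "4. Conclude whether the subtask succeeded, consistent with the ground truth.\n"
    )
```

A planning-level prompt would follow the same pattern but ask the model to check each subtask in order before judging the plan as a whole.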

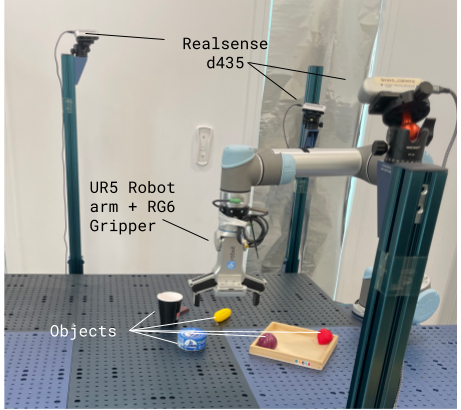

Real-Robot, Policy-Driven Data Collection. To enable realistic evaluation, we introduce UR5-Fail, a new real-world dataset collected using a UR5 robot with three camera views. We execute a state-of-the-art manipulation policy across multiple tasks and record multi-view observations for each subtask. Execution outcomes are manually labeled as success or failure. Planning failures are generated using the same perturbation framework applied in simulation. Compared to prior datasets, UR5-Fail:

- Uses multi-view observations instead of single-view
- Includes autonomous policy rollouts instead of teleoperation
- Captures more realistic and diverse failure modes

Method: The Guardian Model

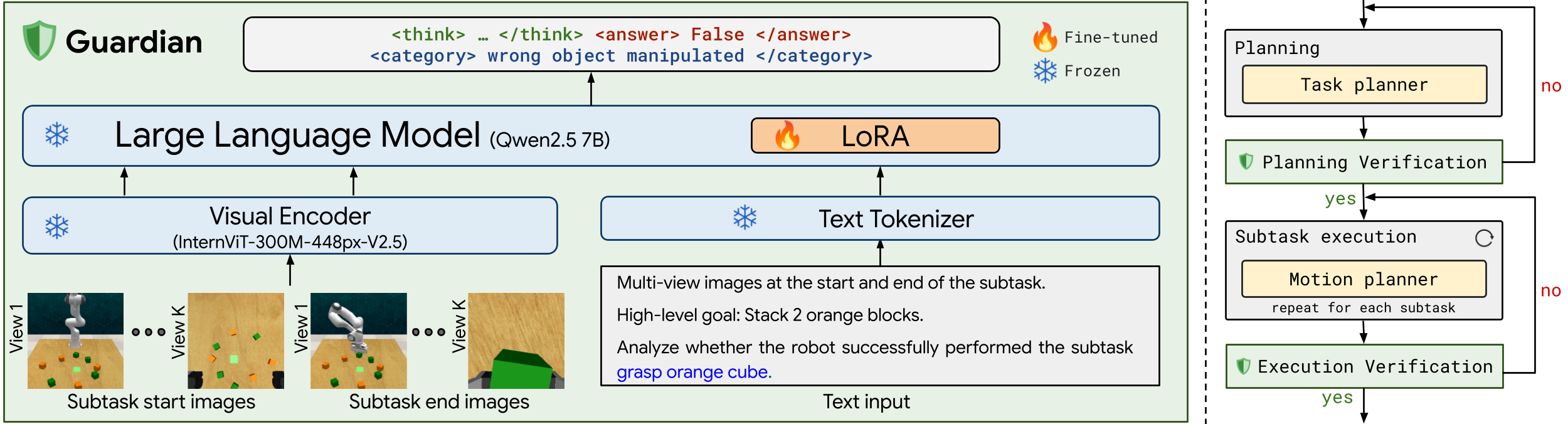

Model Architecture and Integration into a Robotic Manipulation Framework. Left: Overview of the Guardian model architecture. Right: Integration of Guardian model into a robot manipulation pipeline for planning and execution verification.

Guardian is a multi-view reasoning VLM designed for unified failure detection at both planning and execution stages. Unlike prior methods that concatenate images into a single input, Guardian:

- Processes each view independently, preserving spatial detail
- Explicitly models temporal changes between observations
- Generates step-by-step reasoning traces before predicting success or failure

We formulate failure detection as a visual question answering problem:

- Planning verification: assess whether a proposed plan is valid given the task and initial observation
- Execution verification: determine whether a subtask was successfully completed based on before/after observations

Guardian can be seamlessly integrated into robotic pipelines as a plug-and-play verification module, enabling: i) detection of failures during execution, ii) triggering of replanning or retries, iii) use of reasoning traces to guide recovery.
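The plug-and-play integration described above can be sketched as a control loop that wraps a planner and a policy with two verification calls. Everything here is an assumption for illustration: the `Verdict` type, the `run_with_verifier` function, the callable signatures, and the retry limits are hypothetical and do not describe the released Guardian interface.

```python
from dataclasses import dataclass
from typing import Any, Callable, List, Tuple


@dataclass
class Verdict:
    success: bool
    reasoning: str  # step-by-step trace produced before the final answer


def run_with_verifier(
    task: str,
    propose_plan: Callable[[str, str], List[str]],        # (task, feedback) -> plan
    verify_plan: Callable[[str, List[str]], Verdict],      # planning verification
    execute: Callable[[str], Tuple[Any, Any]],             # subtask -> (before, after) observations
    verify_execution: Callable[[str, Any, Any], Verdict],  # execution verification
    max_replans: int = 2,
    max_retries: int = 2,
) -> bool:
    """Run a task with plan checks, replanning, and per-subtask retries."""
    feedback = ""
    for _ in range(max_replans + 1):
        plan = propose_plan(task, feedback)
        verdict = verify_plan(task, plan)
        if verdict.success:
            break
        # Feed the reasoning trace back to the planner to guide recovery.
        feedback = verdict.reasoning
    else:
        return False  # no valid plan within the replanning budget

    for subtask in plan:
        for _ in range(max_retries + 1):
            before, after = execute(subtask)
            if verify_execution(subtask, before, after).success:
                break
        else:
            return False  # subtask still failing after all retries
    return True
```

In this sketch the planner receives the verifier's reasoning trace as feedback, which corresponds to item iii) above. A failed subtask is simply retried here, whereas a fuller system might instead trigger replanning from the current scene.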

Quantitative results on Robotic Manipulation Failure Detection Benchmarks

Qualitative examples in simulation

Qualitative examples in the real world

Scaling structured failure reasoning data across simulation and real-world domains is critical to building robust robotic systems. By combining automated failure generation with multi-view reasoning, Guardian enables reliable detection and correction of both planning and execution errors.

BibTeX

@inproceedings{pacaud2025guardian,
  title={Guardian: Detecting Robotic Planning and Execution Errors with Vision-Language Models},
  author={Paul Pacaud and Ricardo Garcia Pinel and Shizhe Chen and Cordelia Schmid},
  booktitle={Workshop on Making Sense of Data in Robotics: Composition, Curation, and Interpretability at Scale at CoRL 2025},
  year={2025},
  url={https://openreview.net/forum?id=wps46mtC9B}
}

@misc{ppacaud2026failcot,
  title={Scaling Cross-Environment Failure Reasoning Data for Vision-Language Robotic Manipulation},
  author={Paul Pacaud and Ricardo Garcia and Shizhe Chen and Cordelia Schmid},
  year={2026},
  journal={arXiv preprint arXiv:2512.01946}
}

Acknowledgements

This work was performed using HPC resources from GENCI-IDRIS (Grant 2025-AD011015795 and AD011015795R1). It was funded in part by the French government under management of Agence Nationale de la Recherche as part of the “France 2030” program, reference ANR-23-IACL-0008 (PR[AI]RIE-PSAI project), and the ANR project VideoPredict ANR-21-FAI1-0002-01. Cordelia Schmid would like to acknowledge the support by the Körber European Science Prize.

Copyright

The documents contained in these directories are included by the contributing authors as a means to ensure timely dissemination of scholarly and technical work on a non-commercial basis. Copyright and all rights therein are maintained by the authors or by other copyright holders, notwithstanding that they have offered their works here electronically. It is understood that all persons copying this information will adhere to the terms and constraints invoked by each author's copyright.