Available now! Annotated data

Coming soon! Code

Overview

In this project, we tackle the problem of automatically recognizing facial attributes in video. We focus on static attributes, which do not change over the course of a face track. To minimize supervision, we use unlabeled video to improve generalization.

We begin by training our classifier on still images. Still images have several advantages over video: they cover a wide variation across people, a large amount of freely available labeled data exists on the web, and they tend to be of higher quality (higher resolution, less motion blur) than frames from video. This can be seen in the top row of the figure to the left, which shows images from the FaceTracer still-image data set.

However, still-image data contain little variation in viewpoint, expression, or pose. Moreover, we want our algorithms to work on video, so training data from the same domain may help. The bottom four rows of the figure illustrate the range of variation within a single video track: expression, pose, and lighting can all change dramatically over the course of a short track.

Method

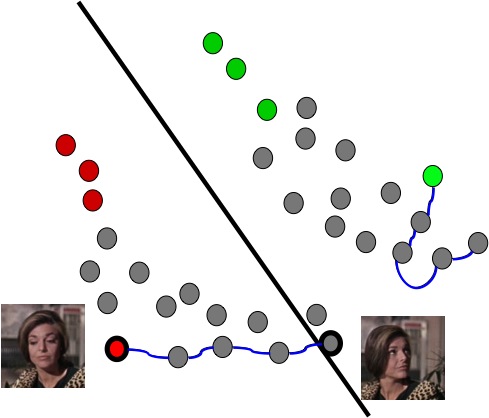

We combine a small number of labeled still images with a large number of unlabeled video frames and use both to train our classifier. Our algorithm, illustrated below, first classifies all the unlabeled video tracks with a classifier trained on the labeled image data. This inferred annotation yields a set of highly confident faces.

Algorithm overview: Initial classifier → Label most confident faces → Inferred annotation → Use video tracks to propagate inferred annotations → Re-train classifier with inferred and propagated data → More general classifier

Many attributes, such as gender, age, and race, do not change over the course of a track. We can therefore use the harder examples in a track to aid classification: a face the classifier is unsure about must share the label of the most confident face in the same track. We add both the most confident face in a track and the least confident one, each labeled with the track's inferred label. The hard examples tend to show different poses or expressions, exactly the kind that are most dissimilar from the still-image data, so adding them helps the classifier generalize.
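A minimal sketch of the per-track min-max selection, assuming each face already has a signed classifier score (positive for one attribute value, e.g. male, negative for the other). The function name `min_max_pick` and the rule that the track's label comes from the face with the largest absolute score are illustrative assumptions, not the authors' code.

```python
from typing import List, Tuple


def min_max_pick(scores: List[float]) -> Tuple[int, int, int]:
    """Return (max_idx, min_idx, label) for one track.

    scores: signed classifier scores, one per face in the track
            (assumed convention: positive = label +1, negative = label -1).
    The most confident face (largest |score|) fixes the track label; the
    least confident face is the one scored lowest in the direction of that
    label, and it inherits the label because the attribute is assumed
    constant over the track.
    """
    if not scores:
        raise ValueError("empty track")
    max_idx = max(range(len(scores)), key=lambda i: abs(scores[i]))
    label = 1 if scores[max_idx] > 0 else -1
    # Confidence relative to the track label; may be negative for a face
    # that the classifier places on the wrong side of the hyperplane.
    min_idx = min(range(len(scores)), key=lambda i: label * scores[i])
    return max_idx, min_idx, label
```

For example, `min_max_pick([0.2, 1.5, -0.3, 0.9])` returns `(1, 2, 1)`: face 1 fixes the label, and face 2, which the classifier actually scores as the opposite class, inherits it as a hard training example.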

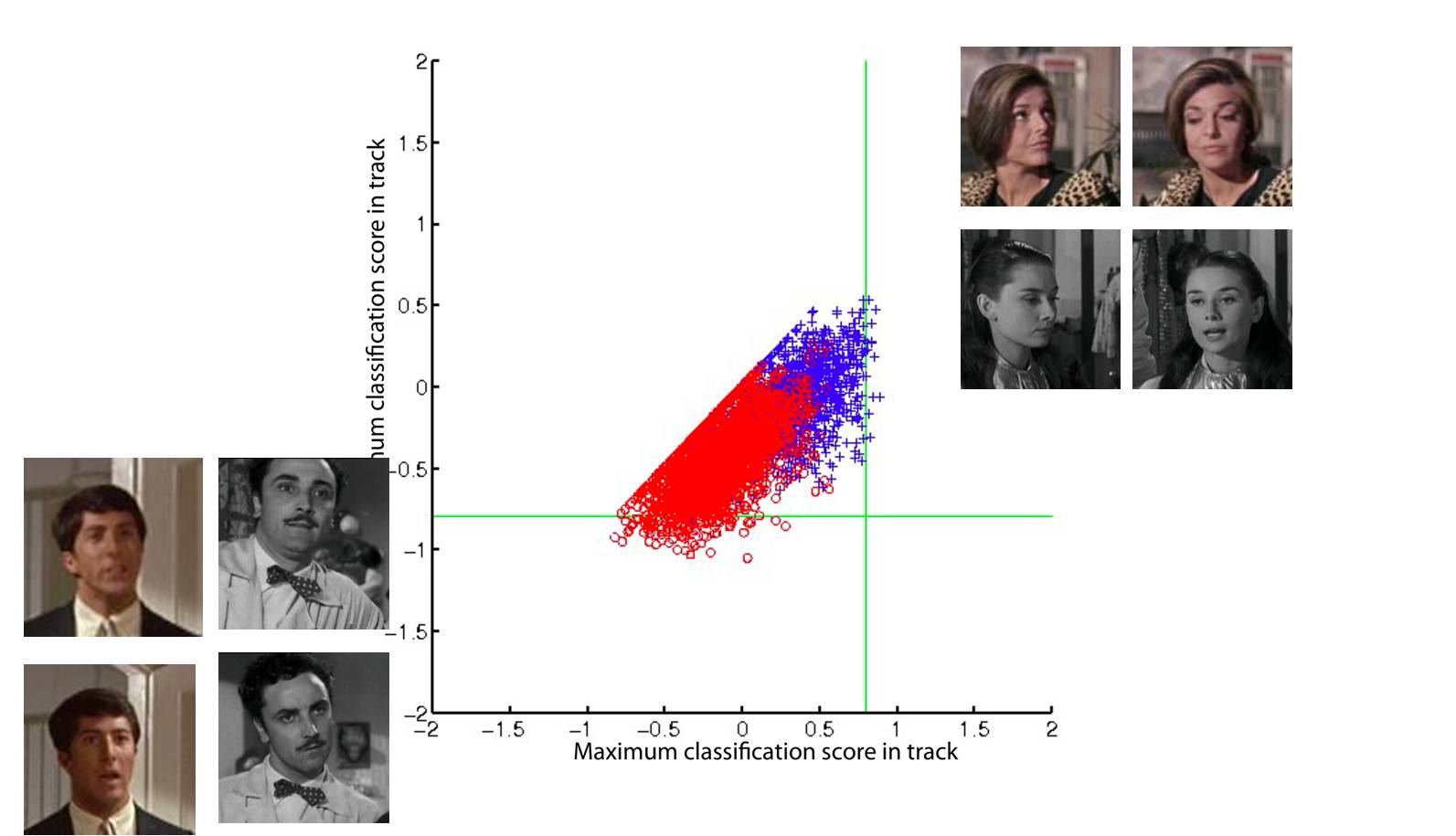

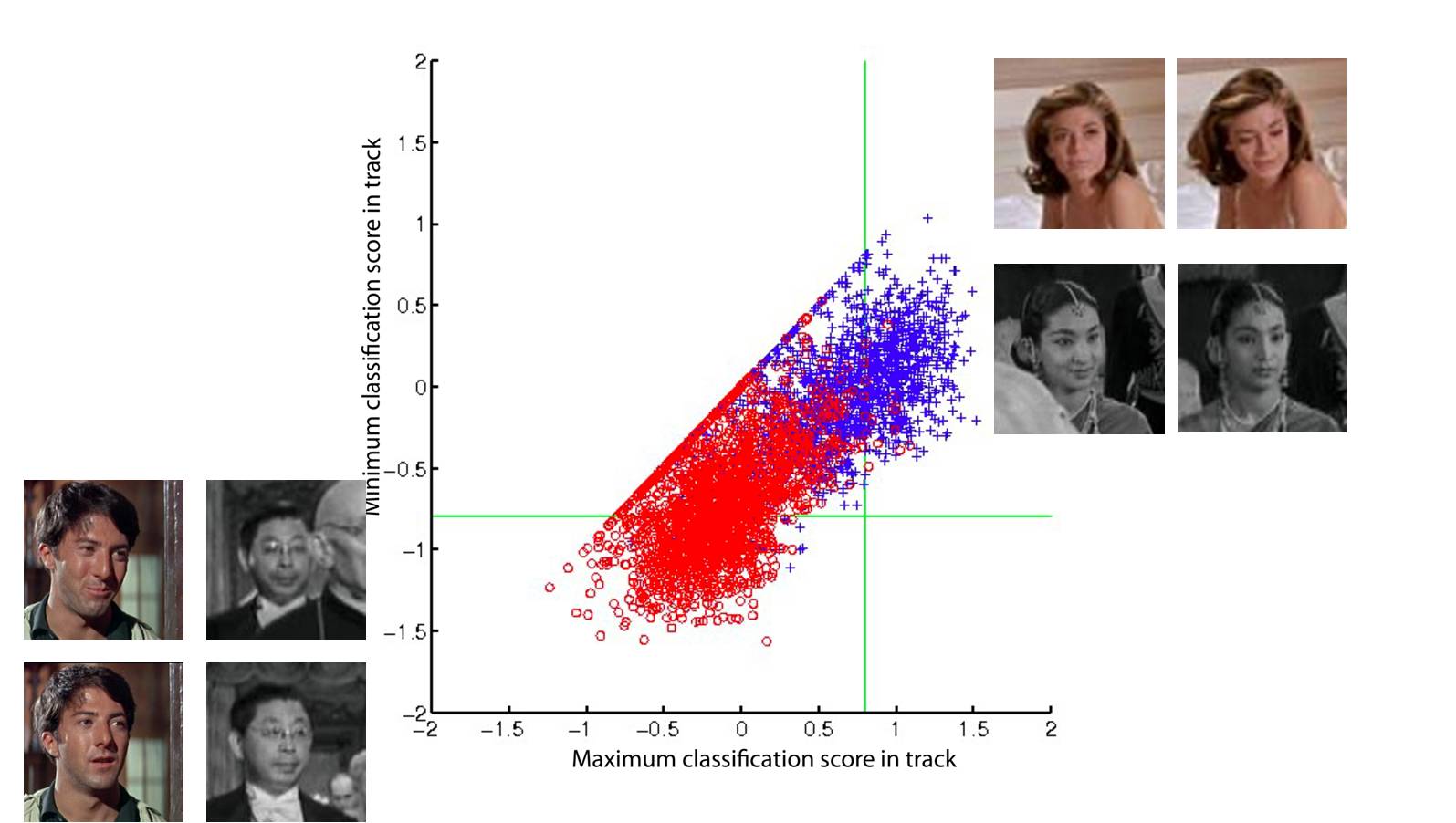

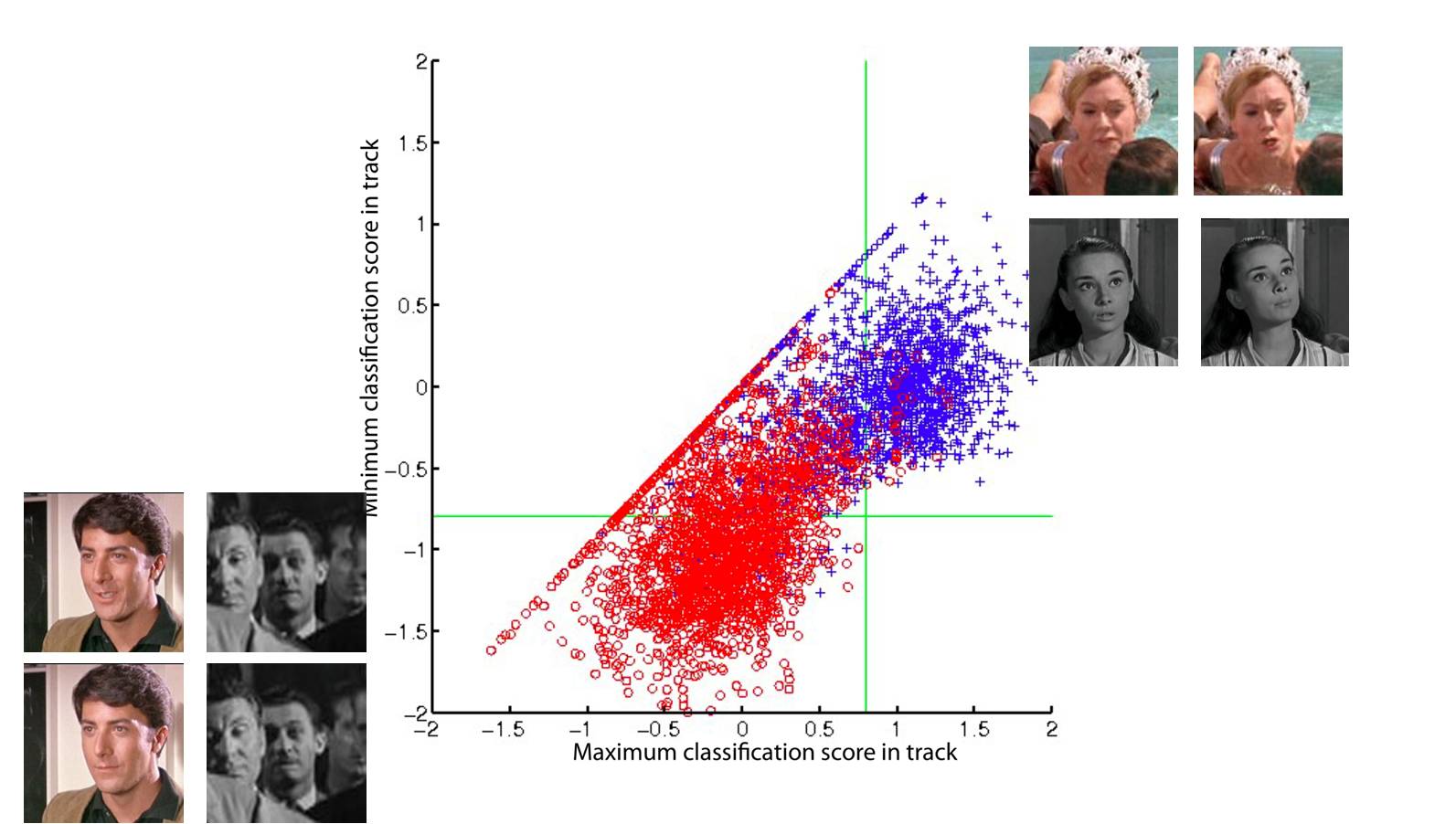

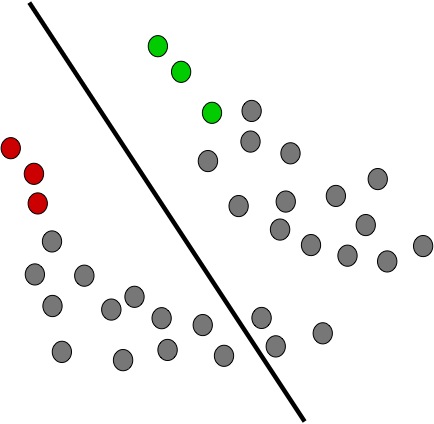

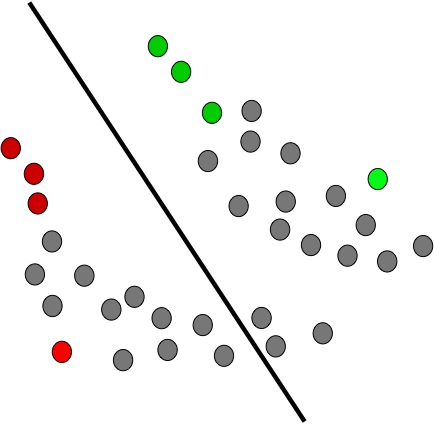

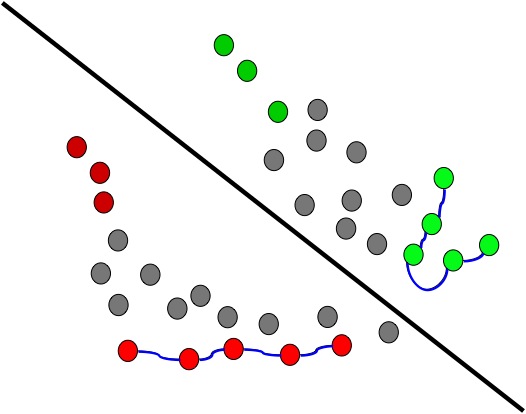

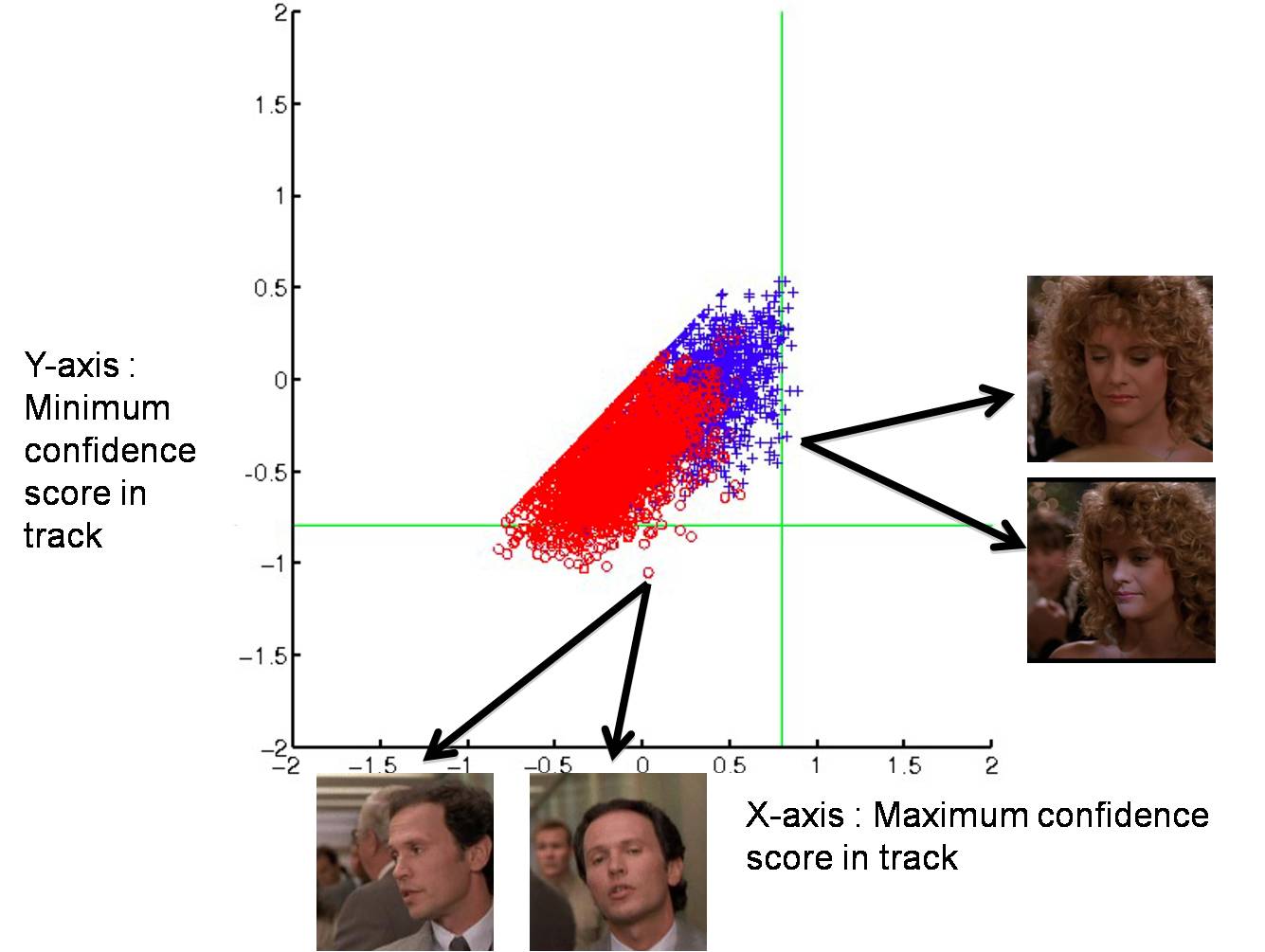

In the figure below, we show the progression of the algorithm as more video data is added. Each point represents a track: the x-axis is the maximum confidence score among the track's faces and the y-axis is the minimum, both computed with the current classifier. Red circles are male tracks and blue crosses are female tracks. We do not actually have access to these ground-truth labels; they are shown for illustration only.


Min-max faces. Each point represents a track, and the minimum confidence score within the track is plotted against the maximum confidence score.

The points we are most interested in lie in the lower right-hand corner. These are tracks with one face classified with high confidence and another classified with low confidence (which may even fall on the wrong side of the decision hyperplane).

Our algorithm iteratively adds the minimum- and maximum-confidence faces from tracks that contain one highly confident face and one less confident face. This gradually separates the data as we force the classifier to generalize.
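The iterative loop can be sketched as follows. This is a toy reconstruction under stated assumptions: a nearest-centroid scorer stands in for the actual classifier (which uses the features and kernel described under Results), confidence is measured relative to the label of each track's most confident face, and the thresholds `hi` and `lo` that define the "lower right-hand corner" are hypothetical parameters not given in the source.

```python
from typing import Callable, List, Sequence, Tuple

Vec = List[float]
Example = Tuple[Vec, int]  # (feature vector, label in {+1, -1})


def train(examples: Sequence[Example]) -> Callable[[Vec], float]:
    """Stand-in classifier (nearest class centroid), NOT the model in the paper.

    Returns a signed scorer: positive means closer to the +1 centroid.
    Assumes both labels occur in `examples`.
    """
    dim = len(examples[0][0])
    cents = {}
    for lab in (1, -1):
        xs = [x for x, y in examples if y == lab]
        cents[lab] = [sum(x[d] for x in xs) / len(xs) for d in range(dim)]

    def sq_dist(a: Vec, b: Vec) -> float:
        return sum((p - q) ** 2 for p, q in zip(a, b))

    return lambda x: sq_dist(x, cents[-1]) - sq_dist(x, cents[1])


def min_max_self_train(labeled: Sequence[Example], tracks: Sequence[List[Vec]],
                       hi: float, lo: float, rounds: int = 5):
    """Iteratively add the max and min faces of 'lower-right-corner' tracks.

    hi, lo: hypothetical thresholds on the max and min confidence of a track.
    """
    data = list(labeled)                # start from the labeled still images
    pending = list(range(len(tracks)))  # indices of tracks not yet used
    clf = train(data)
    for _ in range(rounds):
        added = []
        for t in pending:
            s = [clf(f) for f in tracks[t]]
            i_max = max(range(len(s)), key=lambda i: abs(s[i]))
            label = 1 if s[i_max] > 0 else -1
            conf = [label * v for v in s]  # confidence w.r.t. the track label
            i_min = min(range(len(conf)), key=conf.__getitem__)
            # Lower right-hand corner: one strong face, one weak face.
            if conf[i_max] >= hi and conf[i_min] <= lo:
                data.append((tracks[t][i_max], label))  # inferred label
                data.append((tracks[t][i_min], label))  # propagated label
                added.append(t)
        if not added:
            break
        pending = [t for t in pending if t not in added]
        clf = train(data)  # re-train on images plus inferred/propagated faces
    return clf, data
```

Each track contributes faces at most once, and the loop stops early when no remaining track falls in the corner region under the current classifier.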

Results

We used the FaceTracer [1] still-image data set as our initial training set, together with five unlabeled movies from five different time periods (The Graduate, Roman Holiday, When Harry Met Sally, Desperately Seeking Susan, and Insomnia). Our test set was the movie Love Actually.

We detected faces with the Viola-Jones detector and tracked them with a modified KLT tracker, first described by Everingham et al. [2]. The features are local image descriptors around facial feature points, and the distance measure is Maji and Berg's approximation of the intersection kernel [3].
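For reference, the exact histogram intersection kernel is $K(x, y) = \sum_i \min(x_i, y_i)$. The sketch below computes it and shows that a kernel classifier's decision function $\sum_j c_j K(x, x_j) + b$ regroups into a sum of one-dimensional functions, one per feature dimension. This per-dimension (additive) structure is the property that approximations of the intersection kernel, such as the cited one, exploit; the approximation itself is not reproduced here, and the function names and coefficients are illustrative assumptions.

```python
from typing import Sequence


def intersection_kernel(x: Sequence[float], y: Sequence[float]) -> float:
    """Exact histogram intersection kernel; assumes non-negative features."""
    if len(x) != len(y):
        raise ValueError("feature vectors must have the same length")
    return sum(min(a, b) for a, b in zip(x, y))


def decision(x, support, coeffs, bias):
    """Kernel form: sum_j c_j * K(x, x_j) + b, with c_j = alpha_j * y_j."""
    return sum(c * intersection_kernel(x, sv)
               for sv, c in zip(support, coeffs)) + bias


def decision_additive(x, support, coeffs, bias):
    """Same value regrouped per dimension: sum_i h_i(x_i) + b, where
    h_i(s) = sum_j c_j * min(s, x_j[i]) depends on one coordinate only."""
    total = bias
    for i, xi in enumerate(x):
        total += sum(c * min(xi, sv[i]) for sv, c in zip(support, coeffs))
    return total
```

Because each $h_i$ is a function of a single scalar, it can be tabulated or approximated independently, which is what makes evaluation fast compared with summing over all support vectors.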

We evaluated our algorithm on two attributes, gender and age. FaceTracer defines age with five classes: baby, child, youth, middle-aged, and senior. We grouped baby, child, and youth into one class, young, and middle-aged and senior into a second class, old.
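The age grouping amounts to a fixed lookup table. In this small sketch, the lowercase string spellings of the FaceTracer classes and the helper name `group_age` are assumptions for illustration.

```python
# Five FaceTracer age classes collapsed into two (spellings assumed).
AGE_GROUP = {
    "baby": "young",
    "child": "young",
    "youth": "young",
    "middle-aged": "old",
    "senior": "old",
}


def group_age(label: str) -> str:
    """Map a five-way age label to 'young' or 'old'."""
    try:
        return AGE_GROUP[label]
    except KeyError:
        raise ValueError(f"unknown age class: {label!r}") from None
```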

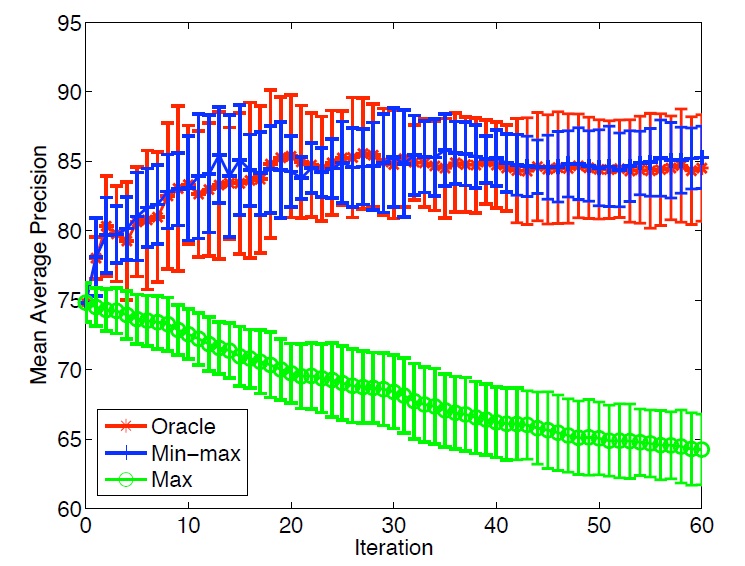

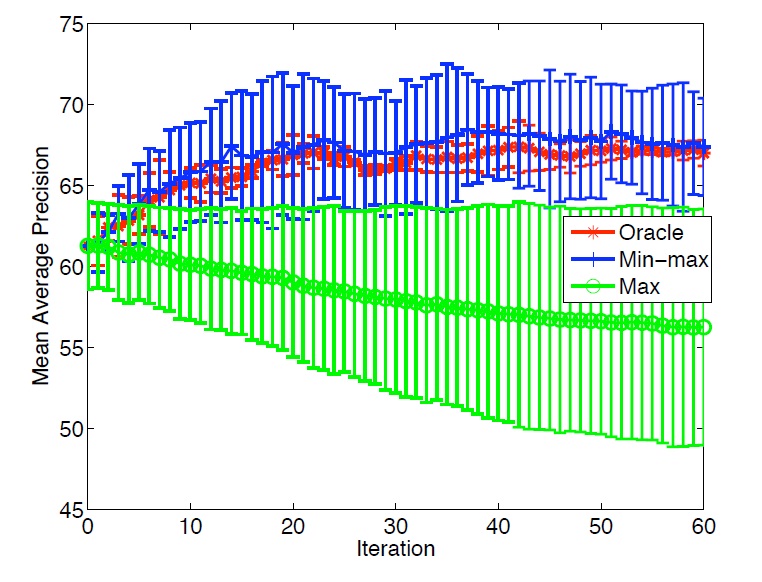

We compare our algorithm, "Min-max", with an "oracle" version of the same algorithm, which ignores incorrectly labeled faces, and with a variant that adds only the maximum-confidence face from each track. The figure below shows how mean average precision changes over the iterations. Performance improves for both gender and age, but the improvement is more substantial for gender.
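As a reminder of the evaluation metric, the sketch below computes non-interpolated average precision (the mean of precision at the rank of each positive) and averages it over classes. The source does not state which AP variant or which set of classes the mean is taken over; averaging over both values of a binary attribute (e.g. male as positive, then female as positive) is an assumption here.

```python
from typing import Sequence, Tuple


def average_precision(scores: Sequence[float], labels: Sequence[int]) -> float:
    """Non-interpolated AP; labels are 1 (positive) or 0 (negative).

    Faces are ranked by descending score; ties keep input order
    (Python's sort is stable even with reverse=True).
    """
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    hits, precisions = 0, []
    for rank, i in enumerate(order, start=1):
        if labels[i] == 1:
            hits += 1
            precisions.append(hits / rank)  # precision at this positive
    return sum(precisions) / len(precisions) if precisions else 0.0


def mean_average_precision(
        per_class: Sequence[Tuple[Sequence[float], Sequence[int]]]) -> float:
    """Average of per-class APs; each entry is (scores, binary labels)."""
    return sum(average_precision(s, l) for s, l in per_class) / len(per_class)
```

For the ranking `[0.9, 0.8, 0.7, 0.6]` with labels `[1, 0, 1, 0]`, the positives sit at ranks 1 and 3, so AP is (1 + 2/3) / 2 = 5/6.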


For further details, please see our paper from the Parts and Attributes workshop at ECCV 2010.

[1] Kumar, N., Belhumeur, P.N., Nayar, S.K.: FaceTracer: A search engine for large collections of images with faces. In: Proc. European Conference on Computer Vision. (2008)

[2] Everingham, M., Sivic, J., Zisserman, A.: "Hello! My name is... Buffy" - automatic naming of characters in TV video. In: Proc. British Machine Vision Conference. (2006)

[3] Maji, S., Berg, A.: Max-margin additive classifiers for detection. In: Proc. International Conference on Computer Vision. (2009)