ContextLocNet: Context-aware Deep Network Models for Weakly Supervised Localization

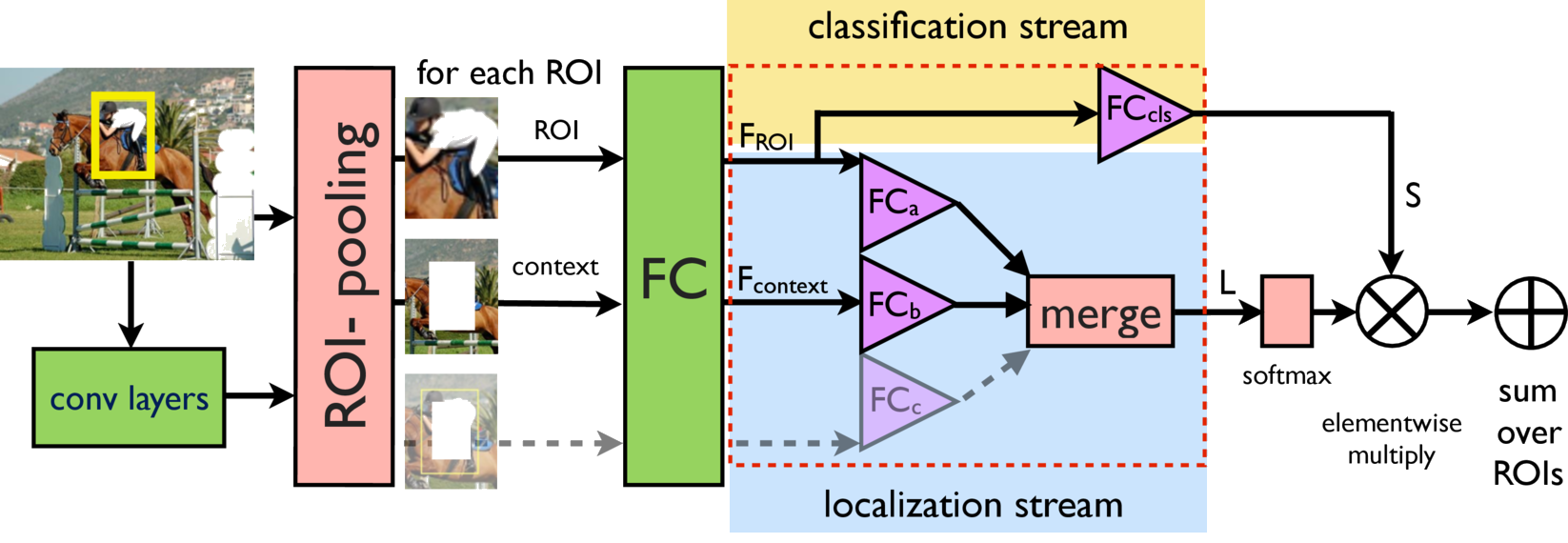

ContextLocNet improves localization by comparing an object score between a proposal and its context.

@inproceedings{kantorov2016,

title = {ContextLocNet: Context-aware Deep Network Models for Weakly Supervised Localization},

author = {Kantorov, V. and Oquab, M. and Cho, M. and Laptev, I.},

booktitle = {Proc. European Conference on Computer Vision (ECCV)},

year = {2016}

}

Abstract

We aim to localize objects in images using image-level supervision only. Previous approaches to this problem mainly focus on discriminative object regions and often fail to locate precise object boundaries. We address this problem by introducing two types of context-aware guidance models, additive and contrastive, that leverage the surrounding context regions to improve localization. The additive model encourages the predicted object region to be supported by its surrounding context region. The contrastive model encourages the predicted object region to stand out from its surrounding context region. Our approach benefits from the recent success of convolutional neural networks for object recognition and extends Fast R-CNN to weakly supervised object localization. Extensive experimental evaluation on the PASCAL VOC 2007 and 2012 benchmarks shows that our context-aware approach significantly improves weakly supervised localization and detection.
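To make the difference between the two guidance models concrete, here is a minimal sketch, not the authors' implementation. It assumes each proposal already has two scalar scores for a given class, one for the region itself and one for its surrounding context, and that the combined scores are normalized over proposals with a softmax. The function names, the softmax normalization, and the toy numbers are all illustrative assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def localization_weights(roi_scores, context_scores, mode):
    """Combine per-proposal scores and normalize them over proposals.

    roi_scores[i]     -- hypothetical score of proposal i's own region
    context_scores[i] -- hypothetical score of the context around proposal i
    mode              -- "additive" (sum) or "contrastive" (difference)
    """
    if len(roi_scores) != len(context_scores):
        raise ValueError("one context score is needed per proposal")
    if mode == "additive":
        # The context should support the region: both scores add up.
        combined = [r + c for r, c in zip(roi_scores, context_scores)]
    elif mode == "contrastive":
        # The region should stand out: a high context score is penalized.
        combined = [r - c for r, c in zip(roi_scores, context_scores)]
    else:
        raise ValueError("mode must be 'additive' or 'contrastive'")
    return softmax(combined)


# Toy example with three proposals (made-up numbers).
roi = [2.0, 2.0, 0.5]
ctx = [1.5, -1.0, 0.0]

additive = localization_weights(roi, ctx, "additive")
contrastive = localization_weights(roi, ctx, "contrastive")

best_additive = max(range(len(roi)), key=additive.__getitem__)
best_contrastive = max(range(len(roi)), key=contrastive.__getitem__)
```

In this toy example the first two proposals have the same region score, so only the context decides between them. The additive variant prefers the proposal whose context also scores high, while the contrastive variant prefers the one whose context scores low, which is the behaviour one would expect from a box that tightly encloses the object.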

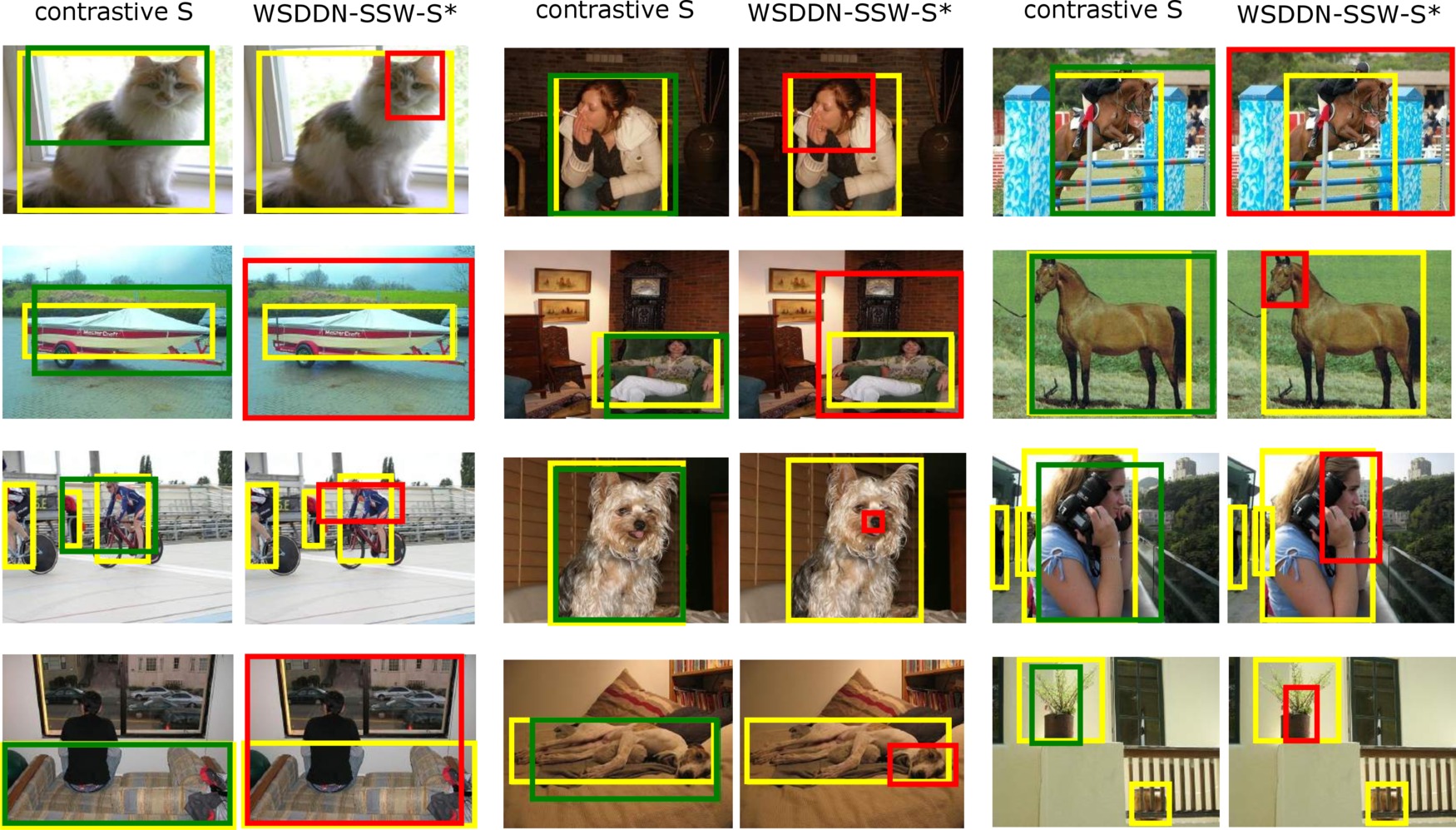

Detections

Acknowledgements

We greatly thank Hakan Bilen, Relja Arandjelović and Soumith Chintala for fruitful discussions and help. This work was supported by the ERC grants VideoWorld and Activia, and the MSR-INRIA laboratory.