SeaRnn: Training RNNs with Global-Local Losses

People

* Both authors contributed equally.

Abstract

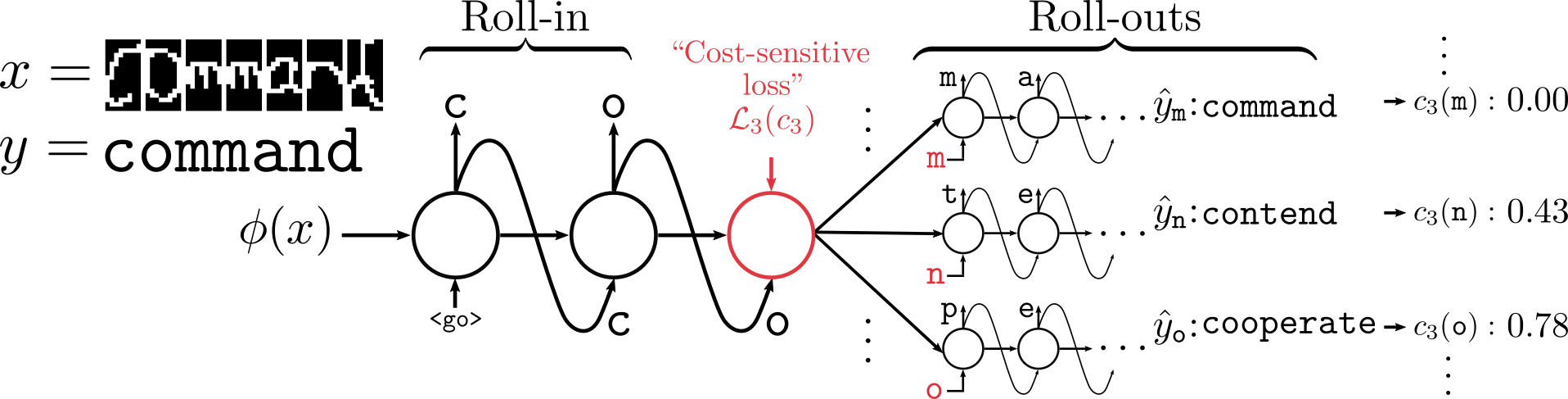

We propose SEARNN, a novel training algorithm for recurrent neural networks (RNNs) inspired by the "learning to search" (L2S) approach to structured prediction. RNNs have been widely successful in structured prediction applications such as machine translation or parsing, and are commonly trained using maximum likelihood estimation (MLE). Unfortunately, this training loss is not always an appropriate surrogate for the test error: by only maximizing the ground truth probability, it fails to exploit the wealth of information offered by structured losses. Further, it introduces discrepancies between training and predicting (such as exposure bias) that may hurt test performance. Instead, SEARNN leverages test-alike search space exploration to introduce global-local losses that are closer to the test error. We first demonstrate improved performance over MLE on two different tasks: OCR and spelling correction. Then, we propose a subsampling strategy to enable SEARNN to scale to large vocabulary sizes. This allows us to validate the benefits of our approach on a machine translation task.

Paper

ICLR 2018

Presented at DeepStruct 2017 (ICML workshop)

Presented at the MILA DLSS 2017

BibTeX

@inproceedings{searnn2018leblond,
  author = {Leblond, R\'emi and Alayrac, Jean-Baptiste and Osokin, Anton and Lacoste-Julien, Simon},
  title = {\textsc{SeaRnn}: Training RNNs with Global-Local Losses},
  booktitle = {ICLR},
  year = {2018}
}

Code

Acknowledgements

This work was partially supported by a Google Research Award.
